New Product Forecasting Using Deep Learning – A Unique Way

Background

Forecasting demand for new product launches has long been a major challenge across industries, and the cost of error is high. Underpredict demand and you lose potential sales; overpredict it and you are left with excess inventory. Multiple studies suggest that new products contribute about one-third of an organization's sales across industries. Industries such as apparel retail and gaming thrive on new launches and innovation, and there this share can rise to as much as 70%. Hence the accuracy of demand forecasts is a top priority for marketers and inventory planning teams.

Analysts and decision scientists use a range of analytics techniques to forecast potential demand. The popular ones are:

- Market Test Methods – Delphi/Survey based exercise

- Diffusion modeling

- Conjoint & Regression based look alike models

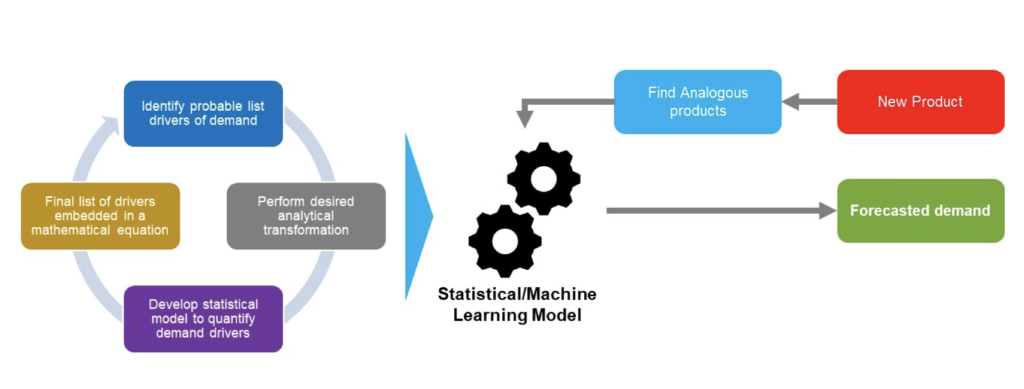

Market Test Methods are still popular, but they need a lot of domain expertise and cost-intensive processes to deliver the desired results. In recent times, Conjoint and Regression based methods have been leveraged more frequently by marketers and data scientists. A typical demand forecasting process for these methods is highlighted below:

Though the process brings analytical rigor to quantifying cross synergies between business drivers and is scalable enough to generate dynamic business scenarios on the go, it falls short of expectations on the following two aspects:

- It includes a heuristic exercise of identifying analogous products by manually defining product similarity, and the robustness of this exercise depends on domain expertise. The manual effort, coupled with the subjectivity of the process, can lead to questionable accuracy.

- It is still a supervised model, and the key demand drivers need manual tuning to improve forecasting accuracy.

For retailers and manufacturers, especially in apparel, food, etc., where the rate of innovation is high and assortments are refreshed from season to season, a heuristic method leads to high cost and error in any demand forecasting exercise.

With the advent of Deep Learning’s image processing capabilities, the heuristic method of identifying feature similarity can be automated with a high degree of accuracy through techniques like the Convolutional Neural Network (CNN). It also minimizes the need for domain expertise, as it learns feature similarity without much supervision. Since the primary reason for including product features in a demand forecasting model is to understand their cognitive influence on customer purchase behavior, a deep learning based approach can capture this more accurately. In addition, techniques like the Recurrent Neural Network (RNN) can be employed to make the models better at adaptive learning, making the system self-reliant with negligible manual intervention.


In practice, CNN and RNN are two distinct methodologies and this article highlights a case where various Deep Learning models were combined to develop a self-learning demand forecasting framework.

Case Background

An apparel retailer wanted to forecast demand for its newly launched “Footwear” styles across various lifecycle stages. The current forecasting engine implemented various supervised techniques, which were ensembled to generate the demand forecast. It had three major shortcomings:

- The analogous product selection mechanism was heuristic and led to low accuracy in downstream processes.

- The heuristic exercise was a significant roadblock in evolving the current process into a scalable architecture, making the overall exercise cost intensive.

- The engine was not able to replicate the product life cycle accurately.

Proposed Solution

We proposed to tackle the problem through an intelligent, automated and scalable framework:

- Leverage Convolutional Neural Networks (CNN) to identify analogous products. CNN techniques have been proven to achieve high accuracy in image matching problems.

- Leverage Recurrent Neural Networks (RNN) to better replicate product lifecycle stages. Since the recurrent (memory) layers of an RNN carry information forward from earlier time steps and are well suited to predicting the next likely event, it is an apt tool for evaluating upcoming time-based performance.

- Since the objective was to devise a scalable method, a cloud-ready, easy-to-use UI was proposed, where a user can upload the image of an upcoming style and the demand forecasts are generated instantly.

Overall Approach

The entire framework was developed in Python using Deep Learning platforms like TensorFlow, with an interactive user interface powered by Django. The Deep Learning systems ran on NVIDIA GPUs hosted on Google Cloud.

The demand prediction framework consists of the following components to ensure end-to-end analytical implementation and consumption.

1. Product Similarity Engine

An image classification algorithm was developed by leveraging Deep Learning techniques like Convolutional Neural Networks. The process included:

Data Collation

- Developed an Image bank consisting of multi-style shoes across all categories/sub-categories e.g. sports, fashion, formals etc.

- Included multiple alignments of the shoe images.

Data Cleaning and Standardization

- Removed duplicate images.

- Standardized the images to a common format and size.
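The cleaning and standardization steps above can be sketched in a few lines. This is a minimal illustration using only the standard library, not the pipeline used in the case: the file names, the bytes-per-file layout and the helper names `drop_duplicates` and `resize_nearest` are all assumptions made for the example.

```python
import hashlib


def drop_duplicates(images):
    """Keep the first occurrence of each byte-identical image.

    `images` maps a file name to raw image bytes (hypothetical layout).
    """
    seen = set()
    unique = {}
    for name, data in images.items():
        digest = hashlib.sha256(data).hexdigest()
        if digest not in seen:
            seen.add(digest)
            unique[name] = data
    return unique


def resize_nearest(pixels, out_h, out_w):
    """Resize a 2-D grid of pixel values with nearest-neighbour sampling."""
    in_h, in_w = len(pixels), len(pixels[0])
    return [
        [pixels[r * in_h // out_h][c * in_w // out_w] for c in range(out_w)]
        for r in range(out_h)
    ]
```

Exact hashing only catches byte-identical copies; near-duplicates (the same photo re-encoded or cropped) would need a perceptual comparison instead, and a real pipeline would normally resize with an image library rather than by hand.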

Define High-Level Features

- A few key features were defined, such as brand, sub-category and shoe design (color, heel, etc.).

Image Matching Outcomes

- Implemented a CNN model with 5+ hidden layers.

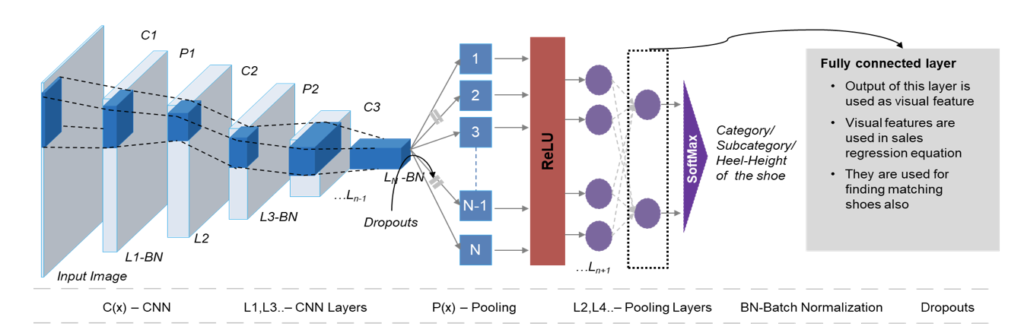

The following image is an illustrative representation of the CNN architecture implemented. Its layers are:

- Input Image: Holds the raw pixel values of the image, with dimensions width, height and the RGB color channels.

- Convolution: The convolution layer extracts features from the input data. The matrix formed by sliding a filter over the image and computing the dot product at each position is called a “Feature Map”.

- Non-Linearity – RELU: This layer applies an element-wise activation function, ReLU, which replaces negative values with zero and introduces non-linearity into the network.

- Pooling: Reduces the dimensionality of each feature map while retaining the most important information. Helps in arriving at a representation that is less sensitive to small shifts and changes in scale of the image.

- Dropout: To prevent overfitting, randomly selected units are dropped (set to zero) during training.

- SoftMax Layer: Output layer that classifies the image to appropriate category/subcategory/heel height classes.
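To make the layer descriptions above concrete, here is a minimal forward-pass sketch in plain Python. It is an illustration of the individual operations on a tiny single-channel grid, not the TensorFlow model built for the case; the function names are chosen for the example, and dropout is left out because it only acts during training.

```python
import math


def conv2d(image, kernel):
    """Slide the kernel over the image and take a dot product at each position."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def relu(fmap):
    """Element-wise ReLU: negative values become zero."""
    return [[max(0.0, v) for v in row] for row in fmap]


def max_pool(fmap, size=2):
    """Non-overlapping max pooling; incomplete edge windows are dropped."""
    return [
        [
            max(fmap[r + i][c + j] for i in range(size) for j in range(size))
            for c in range(0, len(fmap[0]) - size + 1, size)
        ]
        for r in range(0, len(fmap) - size + 1, size)
    ]


def softmax(scores):
    """Convert raw class scores into probabilities that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]
```

For a 3x3 input and a 2x2 filter, `conv2d` returns a 2x2 feature map, which `max_pool` then reduces to a single value. In a trained network the filter weights are learned rather than hand-picked.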

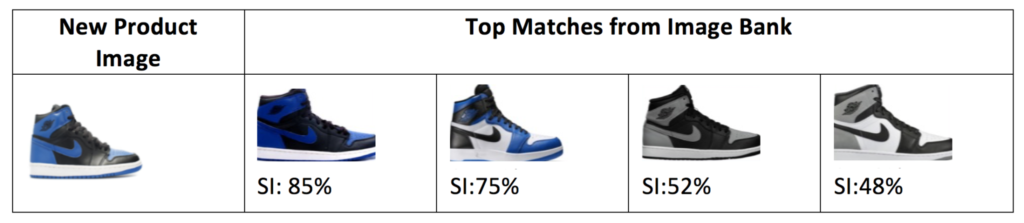

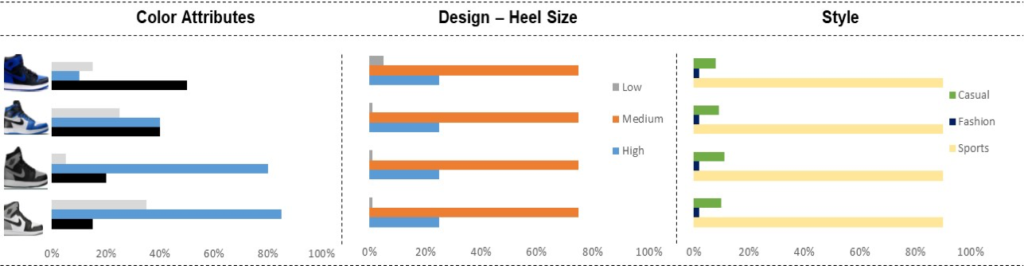

Identified the Top N matching shoes and calculated their probability scores. Classified image orientation as top or side (right/left) alignment of the same image.

Similarity Index: Calculated based on the normalized overall probability scores.
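The article does not spell out how the scores were normalized. One plausible reading, shown here purely as an assumption, is to keep the N best-matching styles and rescale their probabilities so they sum to 1. The style ids and the function name `top_n_similarity` are hypothetical.

```python
def top_n_similarity(scores, n=3):
    """Return the n best-scoring styles with scores rescaled to sum to 1.

    `scores` maps a catalogue style id to the model's match probability
    for the uploaded image (hypothetical ids and values).
    """
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)[:n]
    total = sum(p for _, p in ranked)
    if total == 0:
        # No usable signal: report zero similarity rather than divide by zero.
        return [(style, 0.0) for style, _ in ranked]
    return [(style, p / total) for style, p in ranked]
```

The resulting index can then be used to weight the sales history of each analogous product when it feeds the forecasting step.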

Analogous Product: Attribute Similarity Snapshot (Sample Attributes Highlighted)

2. Forecasting Engine

A demand forecasting engine was developed on the available data by evaluating various factors like:

- Promotions – Discounts, Markdown

- Pricing changes

- Seasonality – Holiday sales

- Average customer rating

- Product Attributes – This was sourced from the CNN exercise highlighted in the previous step

- Product Lifecycle – High sales in initial weeks followed by declining trend
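A sequence model needs these drivers arranged as fixed-length windows of past weeks, each paired with the demand to predict. The sketch below shows one way to do that; the field names in `FEATURES`, the four-week lookback and the function name `make_windows` are assumptions for illustration and not the retailer's schema.

```python
# Illustrative weekly driver fields; past units sold are included as an input.
FEATURES = ["discount", "price", "holiday", "rating", "weeks_since_launch", "units"]


def make_windows(weekly_rows, lookback=4, target="units"):
    """Turn a per-week history into (input window, next-week target) pairs.

    Each row is a dict holding one week's driver values.
    """
    samples = []
    for end in range(lookback, len(weekly_rows)):
        window = [
            [row[f] for f in FEATURES]
            for row in weekly_rows[end - lookback:end]
        ]
        samples.append((window, weekly_rows[end][target]))
    return samples
```

Static product attributes, such as those derived from the image model, could be appended to every row of a window in the same way.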

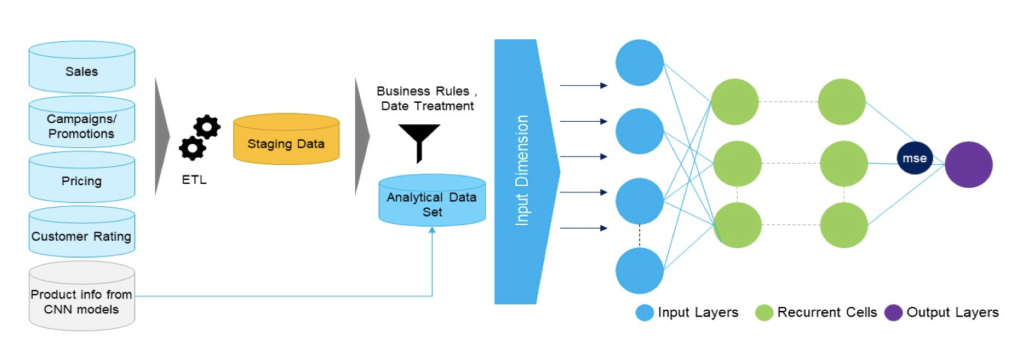

The following image is an illustrative representation of the demand forecasting model based on the RNN architecture.

The RNN was implemented using the Keras Sequential model and trained with “mean squared error” as the loss function.
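The idea behind a recurrent layer and the mean squared error loss can be shown without any framework. The sketch below is a one-unit vanilla RNN in plain Python, not the Keras model from the case (the article does not state which recurrent layer type was used); the weights are passed in by hand and all names are illustrative.

```python
import math


def rnn_forecast(sequence, w_in, w_rec, w_out, bias=0.0):
    """Run a one-unit vanilla RNN over `sequence` and return one prediction.

    sequence: list of feature vectors, one per time step.
    w_in: input weights (one per feature); w_rec and w_out are scalars.
    """
    hidden = 0.0
    for x in sequence:
        # The hidden state mixes the current inputs with the previous state.
        pre = sum(w * v for w, v in zip(w_in, x)) + w_rec * hidden + bias
        hidden = math.tanh(pre)
    return w_out * hidden


def mean_squared_error(actual, predicted):
    """Average of squared differences between actual and predicted values."""
    return sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual)
```

Training consists of adjusting the weights to reduce `mean_squared_error` over the historical windows, which a framework like Keras does automatically through backpropagation.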

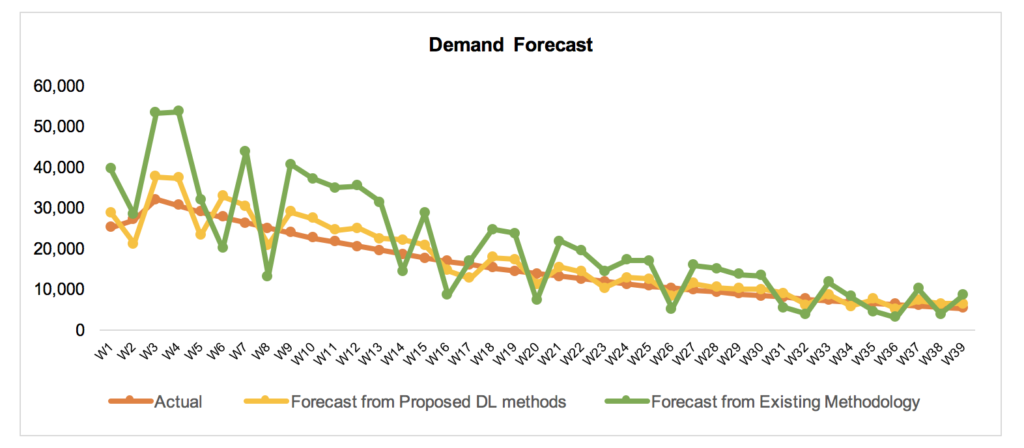

Demand Forecast Outcome

The accuracy of the proposed Deep Learning framework was in the range of 85-90%, an improvement over the 60-65% achieved by the existing methodology.
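The article does not say how accuracy was measured. A common convention in demand forecasting, used here only as an assumption, is 1 minus the weighted absolute percentage error (WAPE). The function name `forecast_accuracy` is illustrative.

```python
def forecast_accuracy(actual, forecast):
    """Return 1 - WAPE, where WAPE = sum|actual - forecast| / sum|actual|."""
    total_actual = sum(abs(a) for a in actual)
    if total_actual == 0:
        raise ValueError("actual demand sums to zero; WAPE is undefined")
    total_error = sum(abs(a - f) for a, f in zip(actual, forecast))
    return 1.0 - total_error / total_actual
```

Under this definition, forecasts of 90, 220 and 110 units against actuals of 100, 200 and 100 miss by 40 units out of 400, which corresponds to an accuracy of 90%.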

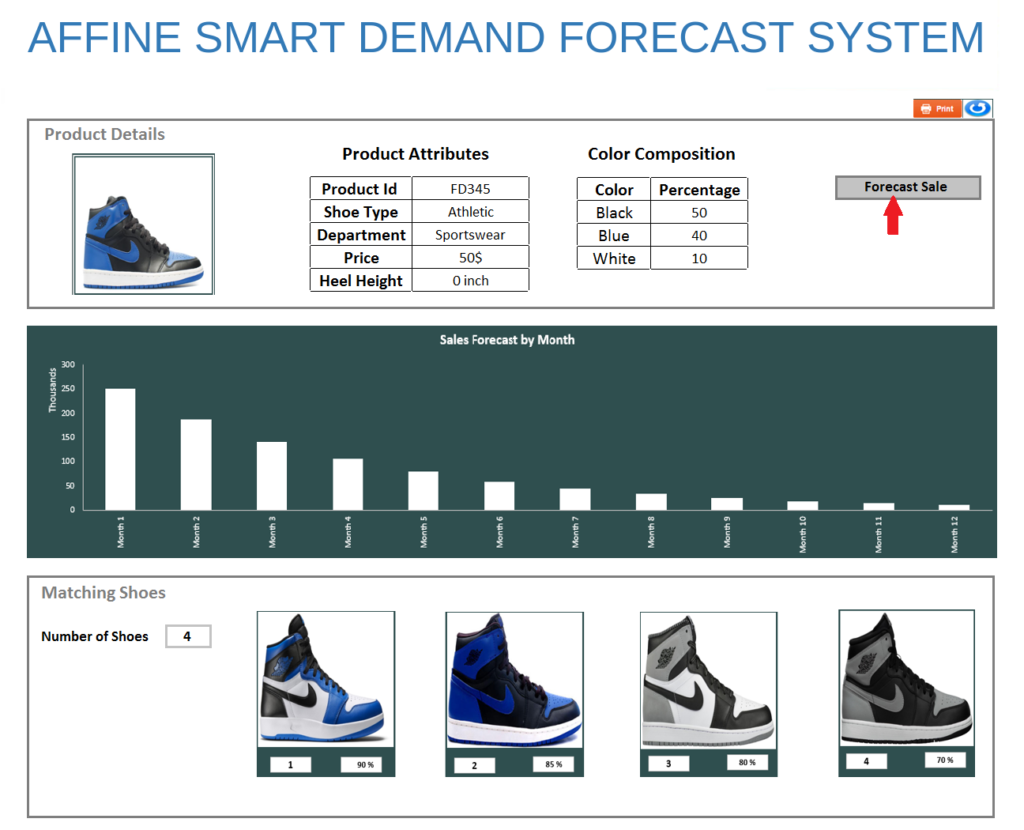

Web UI for Analytical Consumption

An illustrative snapshot is highlighted below:

Benefits and Impact

- Higher accuracy through better learning of the product lifecycle.

- The overall process is self-learning and hence can be scaled quickly.

- Automation of decision-intensive processes like analogous product selection led to a reduction in execution time.

- Long-term cost benefits are higher.

Key Challenges & Opportunities

- The image matching process requires a large amount of data to train.

- The feature selection method can be automated through unsupervised techniques like Deep Auto Encoders, which would further improve scalability.

- Managing image data is a cost-intensive process, but the cost can be rationalized over time.

- The process accuracy can be improved by creating a deeper network architecture and making an additional one-time investment in GPU configurations.